The EU AI Act establishes the first comprehensive regulatory framework for artificial intelligence within the European Union. Organizations must assess whether their AI systems fall within the scope of the regulation and determine which specific obligations apply, particularly regarding risk classification, transparency, documentation, and governance structures.

This session focuses on the classification of AI systems under the Act’s risk-based approach, obligations for high-risk AI systems, documentation and monitoring requirements, and the interaction with existing compliance frameworks such as the GDPR. We will also address internal roles, responsibilities, and oversight mechanisms required for compliant AI deployment.

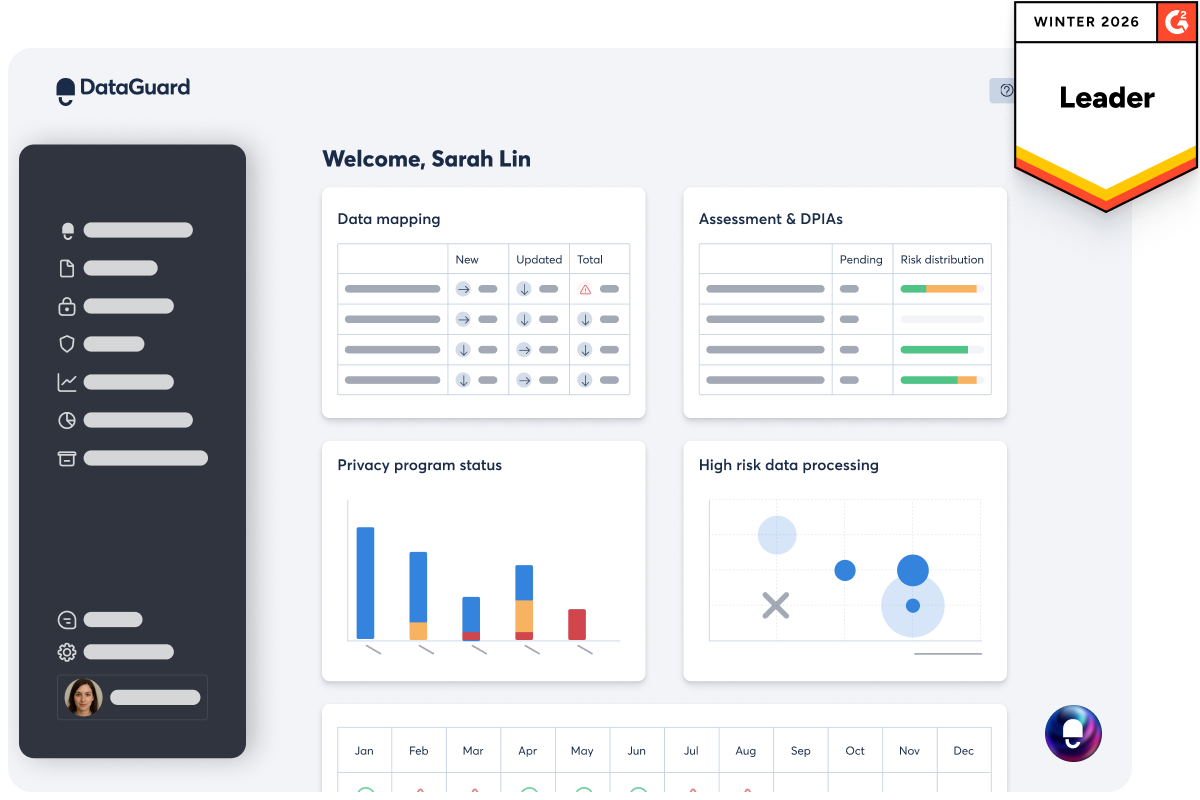

In addition, we will demonstrate how the DataGuard platform can help organizations put EU AI Act requirements into practice, from structured risk assessments and documentation workflows to governance tracking and audit readiness.

Engaging with the EU AI Act early improves legal certainty, reduces regulatory exposure, and enables responsible, strategically aligned use of AI technologies.

Why attend?

- Gain a clear overview of the structure and scope of the EU AI Act

- Understand the risk classification framework for AI systems

- Identify obligations for high-risk AI systems

- Recognize intersections with the GDPR and existing compliance processes

- See how the DataGuard platform can support AI Act compliance in practice

- Reduce liability and regulatory risks